This Reddit User Built a Donut by Letting Claude Watch a Tutorial

TL;DR: Reddit user u/cerspense had Claude Opus 4.6 complete the full Blender Donut Tutorial autonomously. The Gemini API acted as the eyes, parsing the video into a structured JSON plan. Claude wrote the execution steps and coded missing tools on the fly. Visual verification compared the Blender viewport to tutorial screenshots. The creator says Unity and Unreal Engine 5 integrations are next.

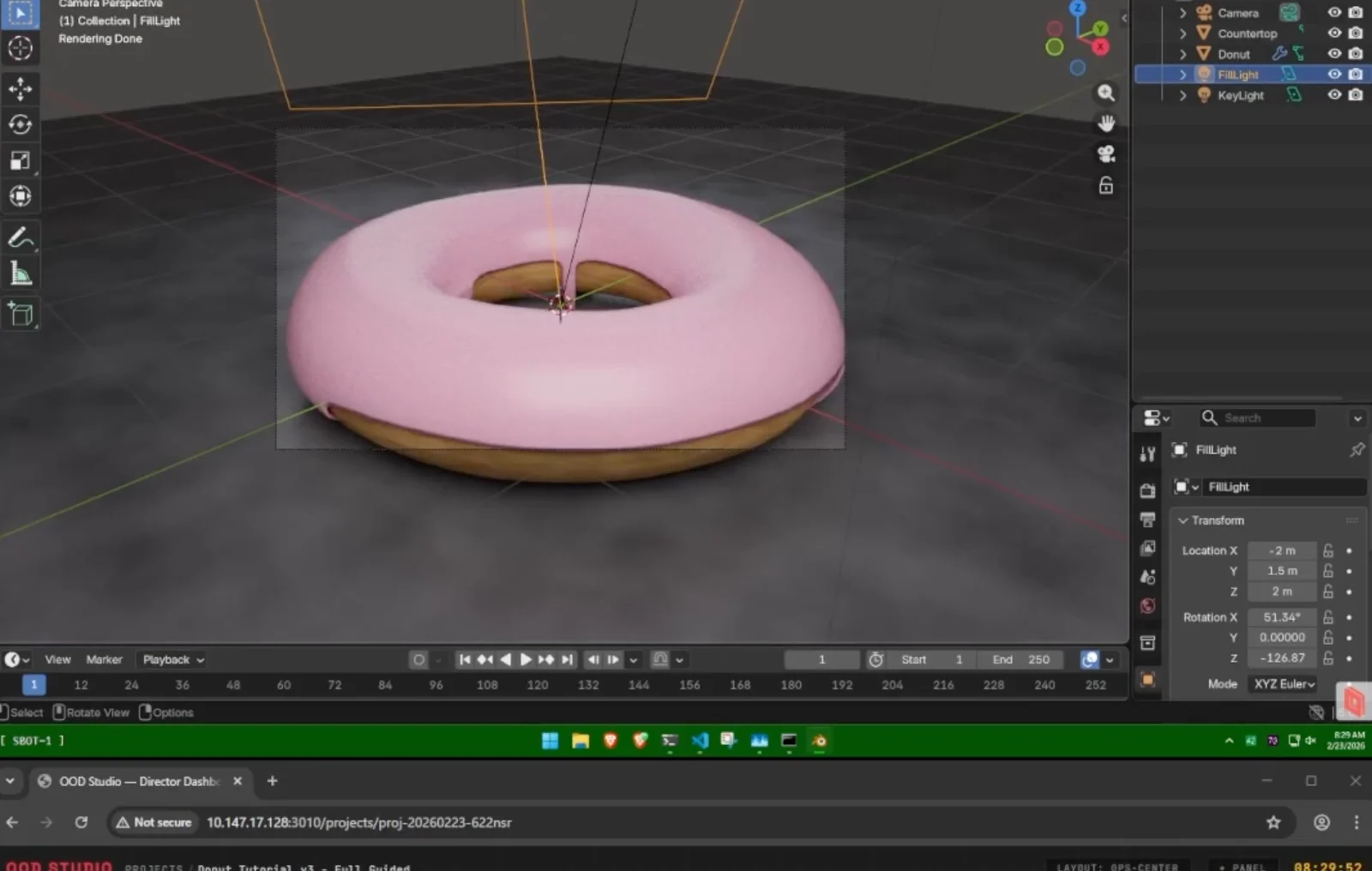

In a viral experiment that has the AI community buzzing, a developer known as u/cerspense on Reddit recently showed how far autonomous agents can be pushed. Using a custom multi-agent system powered by Claude Opus 4.6, they had an AI "watch" and complete the legendary Blender Donut Tutorial with zero human intervention.

You can view the original project and discussion in the Reddit post "I had Opus 4.6 complete the entire Blender Donut Tutorial autonomously."

For the uninitiated, the "Blender Donut" is the universal rite of passage for 3D artists. It involves complex mesh modeling, sculpting, texture painting, and lighting—tasks that usually take humans hours of focused manual labor.

How the "AI Artist" Built It

The developer didn't just give Claude a transcript; they built a sophisticated orchestration layer that allowed the AI to "see" and "act" within the software.

The "Eyes" (Gemini API): Since Claude cannot natively stream YouTube, the developer used the Gemini API to analyze the video. Gemini created a structured JSON plan, identifying key milestones and the exact timestamps where the AI should take screenshots to verify its progress.

The "Brain" (Claude Opus 4.6): Claude acted as the lead architect. It took the plan and the video transcript to generate a step-by-step execution strategy.

Self-Improving Tools: Perhaps most impressively, if the system realized it lacked a specific "tool" or script to perform a Blender command, it used Claude to code the missing tool on the fly and add it to its library.

Visual Verification: The system used "visual and programmatic verification" at every stage, comparing its live Blender viewport against the tutorial screenshots to ensure it hadn't made a mistake.
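
The four pieces above can be sketched as a small loop. This is a minimal illustration, not the creator's code: the plan schema, the milestone names and timestamps, and the helpers `load_plan`, `ToolRegistry`, `run_plan`, `stub_generate_tool`, and `stub_verify` are all assumptions made up for this example. In the real system, a model would write the missing tool and a screenshot comparison would do the verification; here both are stand-in stubs.

```python
import json
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# Hypothetical plan format: the post did not publish the real schema,
# and the names and timestamps below are placeholders.
PLAN_JSON = """
{"milestones": [
  {"name": "base_mesh", "tool": "add_torus", "screenshot_at": "00:03:10"},
  {"name": "smooth_surface", "tool": "shade_smooth", "screenshot_at": "00:07:45"}
]}
"""


@dataclass
class Milestone:
    name: str
    tool: str
    screenshot_at: str  # tutorial timestamp used as the visual reference


def load_plan(raw: str) -> List[Milestone]:
    """Parse the JSON plan (Gemini's role in the post) into milestones."""
    return [Milestone(**item) for item in json.loads(raw)["milestones"]]


class ToolRegistry:
    """Tool library that asks a generator for any tool it lacks."""

    def __init__(self, generate_tool: Callable[[str], Callable[[], str]]):
        self._generate_tool = generate_tool
        self._tools: Dict[str, Callable[[], str]] = {}

    def get(self, name: str) -> Callable[[], str]:
        if name not in self._tools:
            # In the post, Claude writes the missing tool; here it is a stub.
            self._tools[name] = self._generate_tool(name)
        return self._tools[name]

    def names(self) -> List[str]:
        return sorted(self._tools)


def run_plan(plan: List[Milestone], registry: ToolRegistry,
             verify: Callable[[Milestone, str], bool],
             max_attempts: int = 3) -> List[Tuple[str, int]]:
    """Run each milestone, retrying until verification passes."""
    log = []
    for milestone in plan:
        tool = registry.get(milestone.tool)
        for attempt in range(1, max_attempts + 1):
            viewport = tool()  # stand-in for a Blender viewport capture
            if verify(milestone, viewport):
                log.append((milestone.name, attempt))
                break
        else:
            raise RuntimeError(f"{milestone.name} failed verification")
    return log


def stub_generate_tool(name: str) -> Callable[[], str]:
    """Stand-in for model-written tools: returns a fake viewport description."""
    return lambda: f"viewport after {name}"


def stub_verify(milestone: Milestone, viewport: str) -> bool:
    """Stand-in for the screenshot comparison: checks the tool name appears."""
    return milestone.tool in viewport


if __name__ == "__main__":
    registry = ToolRegistry(stub_generate_tool)
    print(run_plan(load_plan(PLAN_JSON), registry, stub_verify))
    print(registry.names())
```

The point of the structure is that planning, tool creation, and verification are separate, swappable parts: the loop itself does not care whether a tool was written by hand or generated a moment ago.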

What This Means for Unity Developers

While this experiment happened in Blender, the implications for Unity are massive. The creator has already confirmed they are working on an even better integration for Unreal Engine 5 and Unity, signaling a shift from "AI assistants" to "AI colleagues."

1. The Death of "How-To" Frustration

We’ve all been there: following a 40-minute tutorial on a specific Unity package (like Sentis or Netcode for GameObjects) only to get stuck on a tiny version mismatch. In an agentic workflow, you could simply give the AI a link to the documentation or a video tutorial. The agent would "watch" it, identify the necessary components, and set up the Scene, Prefabs, and C# scripts for you.

2. Autonomous Asset Pipelines

Unity developers often spend hours jumping between Blender and the Editor. The experiment itself stayed inside Blender, but it suggests that an AI could handle the entire bridge: modeling the asset in Blender, exporting it, and potentially setting up the Material and Physics Collider in Unity autonomously.
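
To make the export half of that bridge concrete, here is a hedged sketch of how an agent might build a headless Blender export command. The function name `build_export_command` and the example paths are hypothetical; the sketch relies only on Blender's `--background` and `--python-expr` command-line options and its built-in `bpy.ops.export_scene.fbx` operator. It builds the command without running it, and it does not cover the Unity-side Material or Collider setup.

```python
from pathlib import Path
from typing import List


def build_export_command(blend_file: Path, unity_assets_dir: Path,
                         blender_exe: str = "blender") -> List[str]:
    """Build argv for a headless Blender run that exports the scene as FBX."""
    fbx_path = unity_assets_dir / (blend_file.stem + ".fbx")
    # bpy.ops.export_scene.fbx is Blender's built-in FBX export operator.
    # Forward slashes keep the embedded path valid on every platform.
    expr = f"import bpy; bpy.ops.export_scene.fbx(filepath={fbx_path.as_posix()!r})"
    # The .blend file comes before --python-expr so it is loaded first.
    return [blender_exe, "--background", str(blend_file), "--python-expr", expr]


if __name__ == "__main__":
    # Hypothetical paths, for illustration only.
    print(build_export_command(Path("donut.blend"), Path("MyGame/Assets/Models")))
```

The resulting list can be handed to `subprocess.run(cmd, check=True)`. Writing the FBX into a folder under the project's `Assets` directory lets Unity pick it up on its next asset refresh.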

3. Agentic Debugging

Instead of just asking Claude to "fix this script," you could theoretically let an agent "look" at your Inspector window, verify your Layer Collision Matrix, and check your Tag assignments—things LLMs traditionally struggle with because they can't "see" your Unity project hierarchy.

The Cost of Innovation

While the technical feat is massive, the Reddit thread quickly turned to the "reality check" of modern high-end AI: the price tag.

Because Claude Opus 4.6 is a premium-priced model, and the project required constant "thinking" and context-heavy image analysis, commenters joked that this was a "$200 donut." For an indie dev, this is currently an expensive way to work, but as cheaper "distilled" models improve, workflows like this could plausibly become a routine part of working in the Unity Editor.

Why This Is a Big Deal

This isn't just about 3D modeling. The experiment is a proof of concept for general-purpose agentic workflows: systems that learn a task from instructional material and then carry it out inside real software.

The creator noted that once the system learns a workflow, it is saved into a "shared registry." This means the AI isn't just "doing" the task; it's learning the skill.
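
The post does not describe how the "shared registry" is stored, so the following is only a sketch of the idea under an assumed design: a JSON file mapping a workflow name to its ordered steps. The class `WorkflowRegistry`, its methods, and the file layout are all hypothetical.

```python
import json
from pathlib import Path
from typing import Dict, List, Optional


class WorkflowRegistry:
    """File-backed store of learned workflows (assumed design, not the creator's)."""

    def __init__(self, path: Path):
        self.path = path
        # Load previously learned workflows if the registry file already exists.
        self._workflows: Dict[str, List[str]] = (
            json.loads(path.read_text()) if path.exists() else {}
        )

    def save(self, name: str, steps: List[str]) -> None:
        """Record a workflow and write the whole registry back to disk."""
        self._workflows[name] = list(steps)
        self.path.write_text(json.dumps(self._workflows, indent=2))

    def lookup(self, name: str) -> Optional[List[str]]:
        """Return the stored steps, or None if the workflow is unknown."""
        return self._workflows.get(name)
```

Because the registry lives on disk rather than in a single session, a later run (or another agent pointed at the same file) can look up a workflow instead of working it out again, which is what separates "learning the skill" from just "doing" the task once.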

"The concept is out of Pandora's box now," one Redditor noted. "People will create streamlined versions of this in months."

We are rapidly approaching a future where, instead of watching a 4-hour tutorial yourself, you simply give the link to your AI agent and tell it: "Learn this, and then build it for me in my Unity project."