Addressing AI Use in Game Development

TL;DR

I built a Unity game demo end to end with AI in two hours, posted it on LinkedIn, and got the kind of hate I expected on Reddit, not LinkedIn. The hate has real roots: the layoffs, the training-data theft, and the AI slop on Steam are all genuine. But most of it gets aimed at solo devs and indie creators, when the actual offenders are Activision, EA, and Ubisoft, who can afford artists and chose AI anyway. I am not stopping. I will keep prototyping, teaching, and posting.

I built a small Unity game demo end to end using only AI: code, art, scene setup, the whole pipeline. The point of the experiment was to answer one question: how fast can you actually ship a playable Unity game from scratch in 2026 if you let AI do the work? I used GameLab Studio for the art and Unity MCP for the engine work (I wrote a full tutorial on the free MCP setup if you want to replicate it).

Then I posted the demo on LinkedIn, and that is where things got strange.

LinkedIn is usually the most welcoming social platform for a build-in-public post. Everyone is signed in with their real name, and the whole point of being on LinkedIn is to look hireable, so the incentives push toward civility. That is why the negative feedback genuinely surprised me. If this had been Reddit I would have shrugged it off, but on LinkedIn it made me sit down and actually research where the hate toward AI in game development is coming from.

Table of contents

- Stating the obvious

- What I actually use AI for

- Where AI for games actually stands in 2026

- What I actually want from an AI art tool

- Is using AI in games actually wrong?

- Where the criticism is fair

- And where it should actually be aimed

- What I will keep doing despite the comments

Stating the obvious

Let me state the obvious before I go any further. I cannot blame the people who left negative comments on my post, and I was not mad while reading them. People are losing their jobs in 2026, and even though I do not think AI has actually replaced anyone yet, the fearmongering around it is enough on its own to push companies into layoffs. That is the climate every game developer is reading the news in right now.

When one of my LinkedIn posts turns hostile, my usual instinct is to delete it. You do not want a thread of negative replies sitting on your profile where a future client or employer can use it against you. This time I left it up. People needed somewhere to drop that energy, and my post happened to be the place. I would rather absorb the venting than pretend the anger is not real.

What I actually use AI for

I am angry at the same things everyone else in this industry is angry at: the market, the layoffs, the way the entire industry has been forced into a defensive crouch over the past three years. I cannot ignore any of that. What I do with that anger is the part where I split off from the LinkedIn comment section.

What AI is actually great for, in my day-to-day, is prototyping. My main work is the Darko Unity brand and tutoring Unity students, which means I do not have the bandwidth to commit six months to a full game. But I have always had ideas I wanted to test, like a taekwondo game with proper attacks, defenses, and counter-attacks, and AI lets me build and play a rough version of one of them in a single afternoon.

Where AI for games actually stands in 2026

I keep an eye on AI for game development on my own time, because I am genuinely interested in where the field is going. The headline release this year has been Genie 3, Google DeepMind's world model that generates explorable 3D environments from a text prompt. I watched a lot of Genie 3 videos. I never paid for it, because two things stopped me cold.

The first is the price. Genie 3 is locked behind Google's AI Ultra plan at 250 dollars a month, US-only. For 250 dollars a month I can hire an actual freelance artist for a few hours and get an asset I can ship, instead of a 60-second walkthrough I cannot. The second is that the output is slop. The worlds look impressive in the marketing trailers and fall apart the moment you actually navigate one. They run at 720p and 24fps, and the model only stays consistent for a couple of minutes. You walk around, turn back, and the world has already forgotten what you saw. It is a tech demo, not a tool.

The bigger problem is what you are allowed to do with the output. You cannot take a Genie 3 world and drop it into Unity or Unreal. The whole experience lives inside Google's product, behind their paywall, on their servers. As a solo developer that is a non-starter. Sora followed the same trajectory: a year of hype, then quiet, because nobody could actually use the output in a real pipeline.

The work that is actually moving the needle for game developers is open source, and most of it is coming out of China. Tencent's Hunyuan 3D is free under an Apache 2.0 license, generates a mesh from text or an image in seconds, and exports straight to GLB and USDZ files that Unity and Unreal can both import. Their newer HY-World 2 goes further and outputs full meshes, 3D Gaussian splats, point clouds, and depth maps that drop directly into Unity, Unreal, Blender, and Isaac Sim. Both are open-weights releases, but HY-World 2 ships under a custom Tencent community license rather than Apache 2.0, so check the terms before shipping commercially.

That is the real split in AI for games right now. The Western flagship products are tech demos behind expensive paywalls with no export path. The Chinese open-source models are the thing solo developers can actually use today.

What I actually want from an AI art tool

The first thing that became obvious when I started building the demo is where AI in game development is still lacking in 2026, and where it is not. Code generation is fine. Not at the level of CRUD apps and web development yet, but pretty good. Any frontier model, from Anthropic, OpenAI, or whoever, will write you Unity C# that compiles and behaves. Asset generation is the part that is way behind, and the reason is training data. Almost every game ever shipped is proprietary software, the source assets never leave the studio, and the public datasets these models train on do not have the volume of game-specific material that the web has for HTML and CSS.

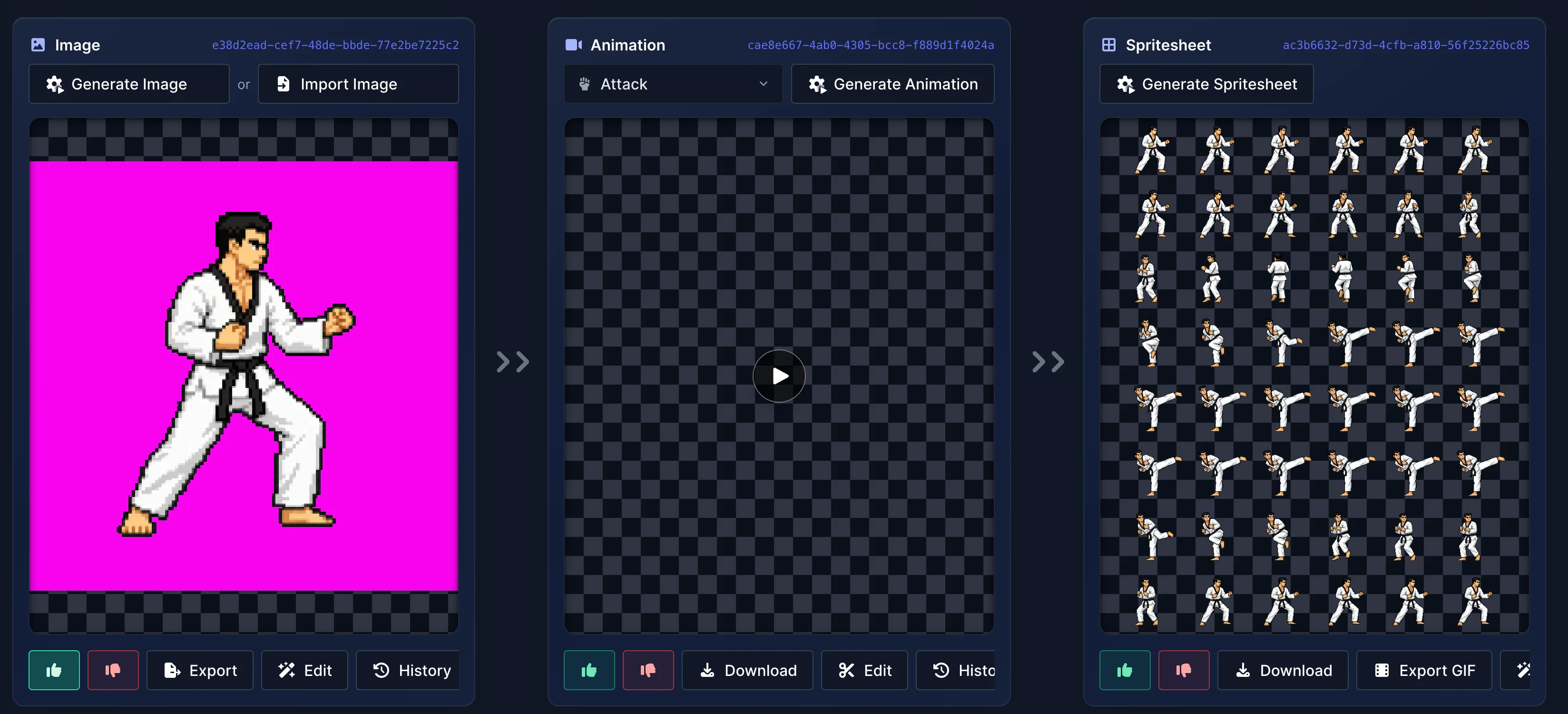

The single biggest gap inside asset generation is style consistency. If you generate a character with one prompt and a second character with another, they are going to look like they came from two different games. That is the wall I hit when I tried OpenAI's Image 2.0 and Gemini. Both produce nice individual images. Neither could give me a second sprite that actually felt like it belonged next to the first. Without that, you cannot ship a game.

This is the part where GameLab Studio earns its place in my stack. Their workflow is built around consistency from the ground up. You generate a base sprite of a character, and from that base sprite the tool generates animations, and from those animations it generates the final spritesheet. Every step inherits the style of the one before it. When I built the demo I generated a taekwondo character once and then layered an attack animation, a dodge animation, and a walk animation on top of it without any of them drifting. Then I did the same thing for the enemy. The results stayed coherent end to end.
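To make "final spritesheet" concrete: a spritesheet is every animation frame packed into one texture, with each clip's frames sitting at known rectangles that the engine slices back out. Below is a minimal Python sketch, assuming fixed-size frames and one row block per clip. The clip names, frame counts, and the `layout_spritesheet` helper are hypothetical illustrations; this is not how GameLab Studio lays out its sheets, which I have not inspected.

```python
from typing import Dict, List, Tuple

Rect = Tuple[int, int, int, int]  # (x, y, width, height), top-left origin


def layout_spritesheet(
    animations: Dict[str, int], frame_w: int, frame_h: int, columns: int
) -> Tuple[Dict[str, List[Rect]], int, int]:
    """Hypothetical layout: each clip starts on a fresh row and wraps
    after `columns` frames. `animations` maps clip name -> frame count.
    Returns the per-clip frame rects plus the sheet width and height."""
    rects: Dict[str, List[Rect]] = {}
    row = 0  # first free row in the sheet
    for name, count in animations.items():
        clip: List[Rect] = []
        for i in range(count):
            col = i % columns
            r = row + i // columns
            clip.append((col * frame_w, r * frame_h, frame_w, frame_h))
        rects[name] = clip
        row += (count + columns - 1) // columns  # rows this clip used
    return rects, columns * frame_w, row * frame_h


# Hypothetical clips for a character like the one in the demo.
sheet, width, height = layout_spritesheet(
    {"walk": 6, "attack": 4, "dodge": 3}, frame_w=64, frame_h=64, columns=4
)
print(width, height, sheet["walk"][4])
```

If you import a sheet like this into Unity, note that Unity measures sprite rects from the bottom-left corner, so each y value flips to `height - y - frame_h`.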

Where the tool stops being magical is in backgrounds. I tried to generate parallax backgrounds, the kind that need to tile cleanly so they can scroll horizontally without a visible seam, and the results were rough. You can see the seam in the shot below where the field, the trees, and the mountains do not line up across the repeat boundary.

GameLab Studio ships with a built-in editor, so if you are an actual artist you can clean those edges yourself. That is how I actually position the tool in my head. For prototyping, it is excellent on its own. For shipping, it is the kind of tool a real artist would use to skip most of the busywork and arrive at the part that actually needs human judgment.
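Whether a background tiles cleanly is easy to check mechanically before you even open an editor. Below is a minimal Python sketch, assuming the image has already been decoded into rows of `(r, g, b)` tuples with whatever image library you prefer (loading is left out). It compares the jump across the repeat boundary, last column back to first, against the average step between neighbouring columns inside the image. The function names and the ratio-based score are hypothetical illustrations, not anything GameLab Studio exposes.

```python
from typing import Sequence, Tuple

Pixel = Tuple[int, int, int]          # (r, g, b), each 0-255
Image = Sequence[Sequence[Pixel]]     # rows of pixels, all the same width


def column_diff(img: Image, a: int, b: int) -> float:
    """Mean absolute per-channel difference between columns a and b."""
    total = 0
    for row in img:
        total += sum(abs(ca - cb) for ca, cb in zip(row[a], row[b]))
    return total / (len(img) * 3)


def seam_score(img: Image) -> float:
    """Hypothetical score: wrap-around edge difference divided by the
    average difference between adjacent interior columns. Around 1.0 means
    the repeat boundary looks like any other column step; much larger
    means a visible seam when the layer scrolls horizontally."""
    width = len(img[0])
    wrap = column_diff(img, width - 1, 0)  # right edge meets left edge
    interior = [column_diff(img, x, x + 1) for x in range(width - 1)]
    baseline = sum(interior) / len(interior)
    return wrap / max(baseline, 1e-6)  # guard against flat images


def gray(values):
    """Build a one-row grayscale test image."""
    return [[(v, v, v) for v in values]]


print(seam_score(gray([0, 10, 20, 30])))  # brightness ramp: seam at the wrap
print(seam_score(gray([0, 10, 20, 10])))  # wraps back smoothly
```

A ratio rather than a raw difference keeps the check fair for busy textures like trees and grass, where neighbouring columns already differ a lot; a flat threshold would flag those even when the seam is invisible.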

I do not know what GameLab Studio is doing under the hood to keep the consistency. My guess is some internal pipeline of fine-tuning and reference conditioning, because the off-the-shelf models I tried cannot do this on their own. Whatever it is, it is the difference between a tool I can use to ship a game and a model I can only use to make pretty screenshots.

Is using AI in games actually wrong?

This is the question I had to sit with after the LinkedIn comments came in. I am out here making content about how to use AI, effectively selling AI to other developers, while at the same time people in this industry are losing their jobs. That is uncomfortable. I had to reconsider whether what I am doing is moral, because pretending the moral question does not exist is the easy way out.

The honest version is that AI is not directly putting game developers out of a job. It is doing it indirectly. The money has not disappeared, it has moved. Investors who used to fund game studios are now funding AI companies instead. So less capital flows into game development, fewer games get greenlit, and headcount goes down. AI did not fire those people. The investors redirected the river, and the people who built games for a living were left high and dry downstream. That is the actual mechanism, and it is the one nobody wants to talk about because it does not have a clean villain.

There is a bigger thing on top of that. I think we are inside a bubble that is going to explode, and when it does the damage is not going to stay inside one industry. It will hit AI, games, software, advertising, every industry that has been pulled into the same gravity well of capital chasing AI. I am genuinely worried about that. Not in a doom-poster way, just in the sense that bubbles popping never stay contained.

The thing that bothers me most is the value question. People are using these tools to make things, sure. The question is whether what gets made is actually worth anything. I look at most of what is being generated and I do not see value. I see slop. I see people generating videos of cats and Donald Trump, and I see the energy and water bills behind them. The water and electricity are real. The output, in most cases, is not.

Sam Altman keeps saying 2026 is the year of productivity. I do not know what productivity he is talking about. You can watch a movie at 2x speed, and it is technically more efficient, but you have not become more productive in any way that matters. You have not understood the movie better. You have not made better movies. You have just consumed faster. That is the same thing happening with AI right now. Faster does not mean better, more output does not mean more value, and the gap between productivity and value is the part the discourse is skipping.

So when I ask whether using AI in games is wrong, the honest answer is that I do not have a clean one. What I keep coming back to is that the tool is not the problem. The way it is being used at scale, the capital reshuffle behind it, and the slop economy that has formed on top of it are all real problems. I just do not think my prototyping a taekwondo game by myself is the part of that problem worth aiming at.

Where the criticism is fair

Before I get to where the heat should actually be aimed, I want to give the criticism the credit it has earned. There are real issues with how AI got built and how it is being used, and pretending otherwise is dishonest.

The first one is training data. Most of the image and code models were trained on other people's work without consent. Artists, illustrators, and writers had their portfolios scraped and turned into the very thing that competes with them. That is a legitimate grievance, and "the law allows it" is not the same as "this was OK." Anyone using these tools should at least be honest about that part.

The second one is the layoffs. The mechanism is indirect, as I said earlier, but the result is the same. People who built games for a living are applying for jobs that do not exist anymore, and AI is one of the reasons fewer of those jobs exist. Not the only reason, but one of them.

The third one is the slop. Steam tightened its "Made With AI" disclosure rules in January 2026 (the original policy went live in January 2024) because the storefront was filling up with one-prompt asset flips. Players were not making that up. The label exists because the problem is real. Even review-bombing campaigns like the one against Shrine's Legacy, where a game made entirely by human artists got mass-bombed by users who assumed it was AI, only happen because the broader fear is grounded enough that false accusations look plausible.

I am not going to pretend any of that is invented. The part I disagree with is the framing, the part where solo devs and indie creators get treated as the face of a problem that is mostly being driven somewhere else. That is the next section.

And where it should actually be aimed

Most of the AI hate I see online lands on the wrong target. Solo devs prototyping games at home are not the reason artists are losing jobs in this industry. The reason is the publishers who already had an artist payroll and decided they preferred a model.

Activision is the loudest example. In late 2023 they sold an AI-generated CoD skin inside Modern Warfare 3 (the Yokai's Wrath bundle), and in 2025 they disclosed on their Steam pages that Black Ops 6 and Warzone use generative AI for in-game assets. While that was happening they were also laying off art and design staff. EA has its own version, with a publicly announced partnership with the team behind Stable Diffusion to "creatively direct the generation of game content" across art and 3D environments. Ubisoft has been pushing Ghostwriter for NPC dialogue since 2023 and signed onto Nvidia's AI NPC initiative the year after. These are the companies with budgets to hire any artist they want. They chose AI anyway.

That is where the conversation should be. Not at the indie dev who could not afford a 2D artist on Fiverr and used a tool to ship a prototype. Not at the student debugging their first Unity character controller with ChatGPT. The angry comments on my LinkedIn post would land much harder if they were aimed at the publishers who are actually replacing artists, instead of at the developers who never had artists to replace in the first place.

Sentiment data from artists inside the industry backs this up. Per the GDC 2026 survey, 64 percent of visual and technical artists view generative AI negatively, the highest of any role. They are not mad in the abstract; they are mad because they can see what is happening at their own companies. That is a fight worth having. It is just not a fight with me.

What I will keep doing despite the comments

The first thing I am keeping is being up to date with AI tooling. Whether the bubble pops or not, the technology itself is going to stay. The dot-com bubble in 2000 destroyed companies and capital, but the internet did not go away after it. It became the default substrate everything else got built on. AI is on the same trajectory. Refusing to learn the tools because you do not like the investors chasing them is just refusing to learn the tools. I am not going to do that.

The second thing is using AI for prototyping without apologizing for it. The argument that AI generates generic, derivative content does not survive contact with the indie scene that already existed before AI. How many medieval games do we have where every single one is Viking, Nordic, or some flavor of generic fantasy? The Witcher 3 stood out precisely because it committed to a Slavic and Eastern European atmosphere instead of recycling the same dark forest with elves. Kingdom Come: Deliverance II stood out for the same reason. The rest of the medieval shelf blends into one long Viking helmet. Indie games on Reddit are even worse: every other one is a side-scroller starring a generic animal main character, usually a bunny, with no reason for that bunny to exist. The artists were already copying each other before AI showed up. Pretending the alternative to AI is some authentic human art renaissance is a fantasy.

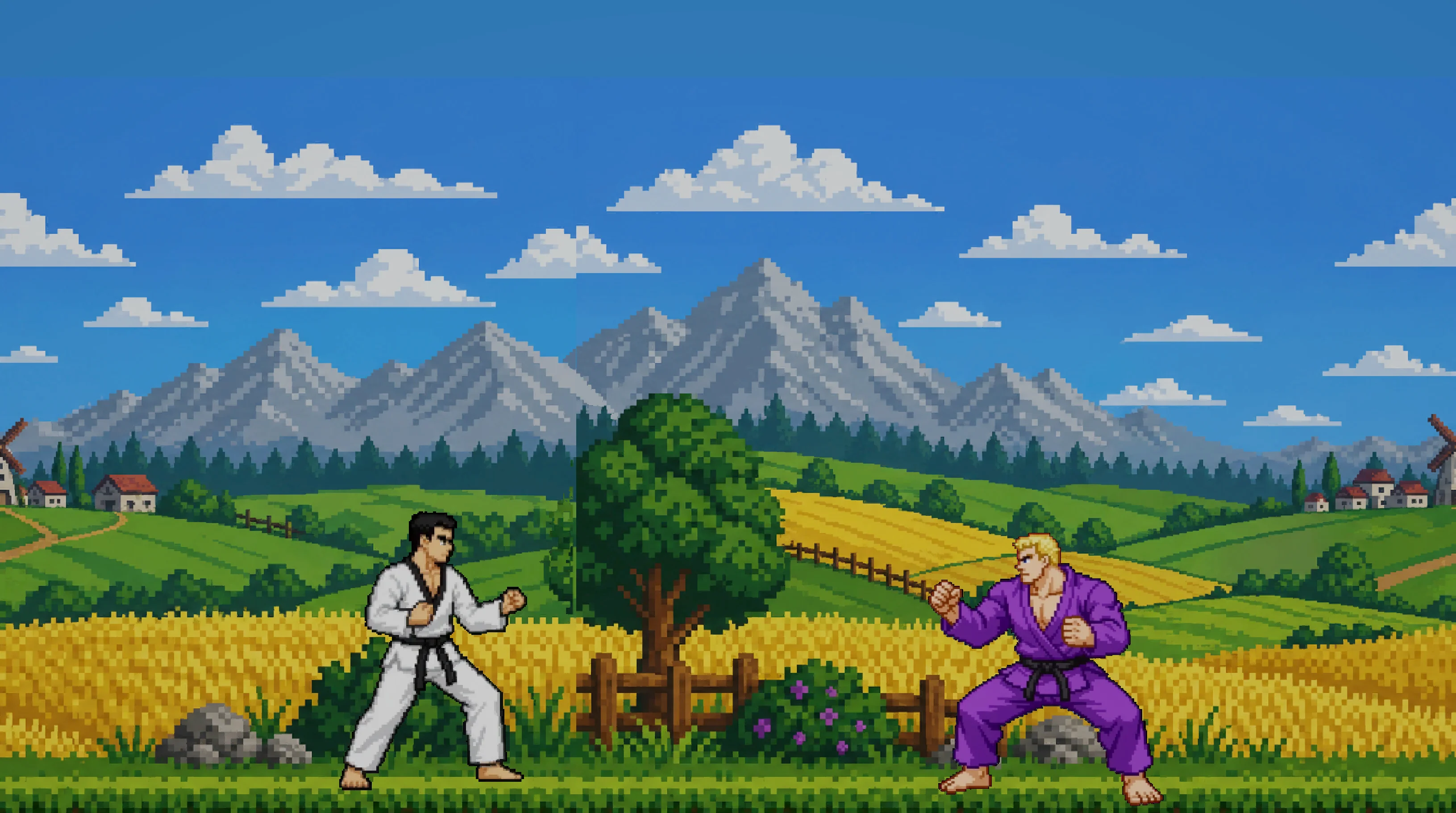

What I am defending is the opposite of generic. Look at my own demo. The player is wearing an actual World Taekwondo dobok, a uniform I know in detail because I trained taekwondo for years. He uses a real battle stance, a real back kick, a real front kick, and a real dodge animation. The only one I would push back on is the dodge, because the model pulled the back leg too high. In a real fight that is a mistake: you lose your center of gravity and you eat a counter.

The enemy wears a judo kimono and is built heavier than the player, so his entire game plan is to close the distance and body slam. I skipped the actual grappling animations to keep the demo simple. The combat is Dark Souls inspired, so it rewards patience, timing, and positioning. Positioning is a real thing in taekwondo: where you stand on the mat is part of the strategy, and that is the part I actually wanted to put on screen.

The point is not "AI generated all of this." The point is that I shaped every prompt with real context I lived through, and the result is a prototype that has more thought behind it than most random-bunny-side-scrollers shipping on Steam right now. That is what I am going to keep doing. I will keep using AI as the production layer, and I will keep being the person who decides what to put in front of it. No comment section is going to talk me out of that.